AI Reliability: The Missing Piece in Production Deployment

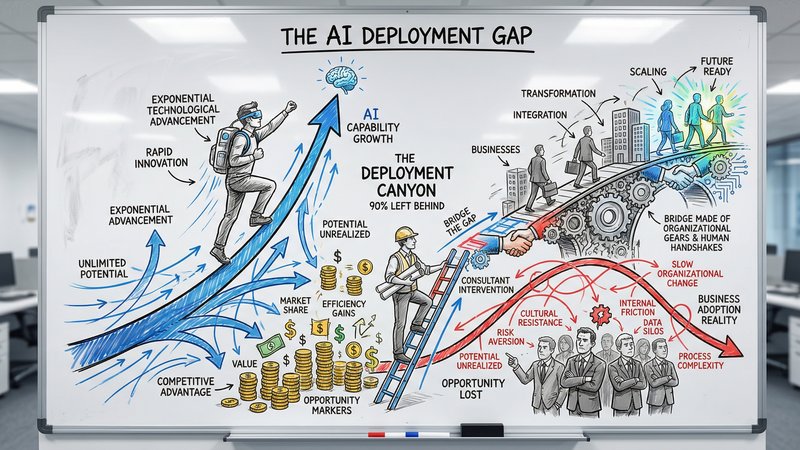

Only 31% of AI use cases reach production. The gap is not capability, it is reliability. Here is how to build AI systems your team and clients can actually trust.

The Production Gap Nobody Talks About

Almost nobody is talking about whether AI works reliably enough to trust with real client work.

Here is the uncomfortable reality: only 31% of AI use cases reach full production. For the rest, the outputs are not reliable enough to deploy without constant human babysitting. And babysitting AI at scale costs more than doing the work manually.

For B2B service businesses, this is not an abstract problem. When an AI-generated proposal contains a fabricated statistic, when an automated report cites a source that does not exist, when a chatbot gives a client confidently wrong information, the damage is immediate. Your reputation takes the hit, not the tool’s.

The gap between “AI can do this in a demo” and “AI does this reliably every Tuesday at 9am” is where most businesses get stuck. Closing that gap is the difference between a tool that collects dust and a system that actually runs part of your business.

Why AI Fails in Production

AI unreliability is not random. It follows predictable patterns, and understanding those patterns is the first step to building systems you can trust.

Hallucinations are still the primary risk

AI models generate plausible-sounding content that is factually wrong. This is not a bug that will be patched. It is a fundamental characteristic of how large language models work. They predict the most likely next word from patterns in their training data, not from a check against what is true.

The scale of this problem is significant. Over 120 cases of AI-driven legal hallucinations have been identified since mid-2023, with at least 58 occurring in 2025 alone. One case resulted in a €29,000 penalty. In healthcare, manufacturing, and financial services, hallucinated outputs carry even higher stakes.

For service businesses, the risk is more subtle but equally damaging. A proposal that includes a “statistic” the AI invented. A client email with a recommendation based on a hallucinated precedent. A financial summary with numbers that look right but are not. Nearly 4 in 10 executives have made incorrect decisions based on AI outputs that sounded correct but were not.

Context window failures

AI models can only process a limited amount of information at once, known as the context window. When you feed in a long client brief, a 50-page contract, or months of CRM data, the model may silently drop critical details. It will not tell you it missed something. It will just generate a response that looks complete but is not.

This is especially dangerous for service businesses handling complex, multi-step client work. The AI output looks professional and thorough. But it is missing the one detail from page 37 that changes everything.
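One way to make this failure loud instead of silent is to check the input size before it ever reaches the model. The sketch below is illustrative only: the names `check_context_budget` and `split_into_chunks` are hypothetical, and the four-characters-per-token ratio is a rough assumption for English text, not a property of any particular model. Use your provider's tokenizer for real counts.

```python
from dataclasses import dataclass

# Assumption: roughly 4 characters per token for English text.
# This is a heuristic; a real tokenizer will give different numbers.
CHARS_PER_TOKEN = 4


@dataclass
class BudgetCheck:
    estimated_tokens: int
    budget_tokens: int

    @property
    def fits(self) -> bool:
        return self.estimated_tokens <= self.budget_tokens


def check_context_budget(documents: list[str], budget_tokens: int) -> BudgetCheck:
    """Estimate total input size and compare it to a token budget."""
    total_chars = sum(len(doc) for doc in documents)
    estimated = -(-total_chars // CHARS_PER_TOKEN)  # ceiling division
    return BudgetCheck(estimated_tokens=estimated, budget_tokens=budget_tokens)


def split_into_chunks(text: str, max_tokens: int) -> list[str]:
    """Group paragraphs into chunks that stay under the budget.

    A single paragraph longer than the budget is kept whole in its own
    chunk, so the caller should still run check_context_budget on it.
    """
    max_chars = max_tokens * CHARS_PER_TOKEN
    chunks: list[str] = []
    current = ""
    for paragraph in text.split("\n\n"):
        candidate = f"{current}\n\n{paragraph}" if current else paragraph
        if len(candidate) <= max_chars or not current:
            current = candidate
        else:
            chunks.append(current)
            current = paragraph
    if current:
        chunks.append(current)
    return chunks
```

If `check_context_budget(...).fits` is false, the workflow can stop and ask a human, or process the document chunk by chunk, rather than letting the model quietly skip page 37.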

Consistency drift

The same prompt given to the same model on Monday and Thursday can produce different outputs. For one-off creative tasks, this is fine. For production workflows that need predictable, repeatable results, it is a serious problem.

When your automated client onboarding process sends slightly different welcome messages every time, or your AI-generated reports vary in structure and tone week to week, you lose the consistency clients expect from a professional service.

What Reliable AI Deployment Actually Looks Like

The businesses that get AI to production share a set of practices that have nothing to do with choosing the right model. They are operational patterns that make any model more reliable.

1. Never deploy AI as a final output

This is the foundational rule. AI generates first drafts. Humans review, edit, and approve before anything reaches a client.

This is the architecture that makes AI useful. The first draft that took two hours now takes two minutes. The review takes fifteen. Net time saved: over an hour. Quality stays the same or improves because the human reviewer is working from a structured starting point instead of a blank page.

The businesses that fail at AI deployment are almost always the ones that tried to remove the human from the loop entirely. The ones that succeed keep the human in the loop but move them from production to quality control.
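The rule is easiest to keep when the system enforces it. Here is a minimal sketch of an approval gate, assuming a simple two-state workflow. The `Deliverable` class, `send_to_client` function, and status names are all hypothetical; a real system would plug this into whatever tool actually sends the email or document.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Status(Enum):
    DRAFT = "draft"
    APPROVED = "approved"


class NotApprovedError(Exception):
    """Raised when something tries to send an unreviewed AI draft."""


@dataclass
class Deliverable:
    content: str
    status: Status = Status.DRAFT
    reviewer: Optional[str] = None

    def approve(self, reviewer: str, edited_content: Optional[str] = None) -> None:
        """Record a named human reviewer and any edits they made."""
        if not reviewer.strip():
            raise ValueError("A named human reviewer is required")
        if edited_content is not None:
            self.content = edited_content
        self.reviewer = reviewer
        self.status = Status.APPROVED


def send_to_client(deliverable: Deliverable) -> str:
    """Refuse to release anything that has not been approved."""
    if deliverable.status is not Status.APPROVED:
        raise NotApprovedError("AI draft has not been reviewed by a human")
    return deliverable.content  # placeholder for the real send step
```

The point of the design is that there is no code path from draft to client that skips `approve`.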

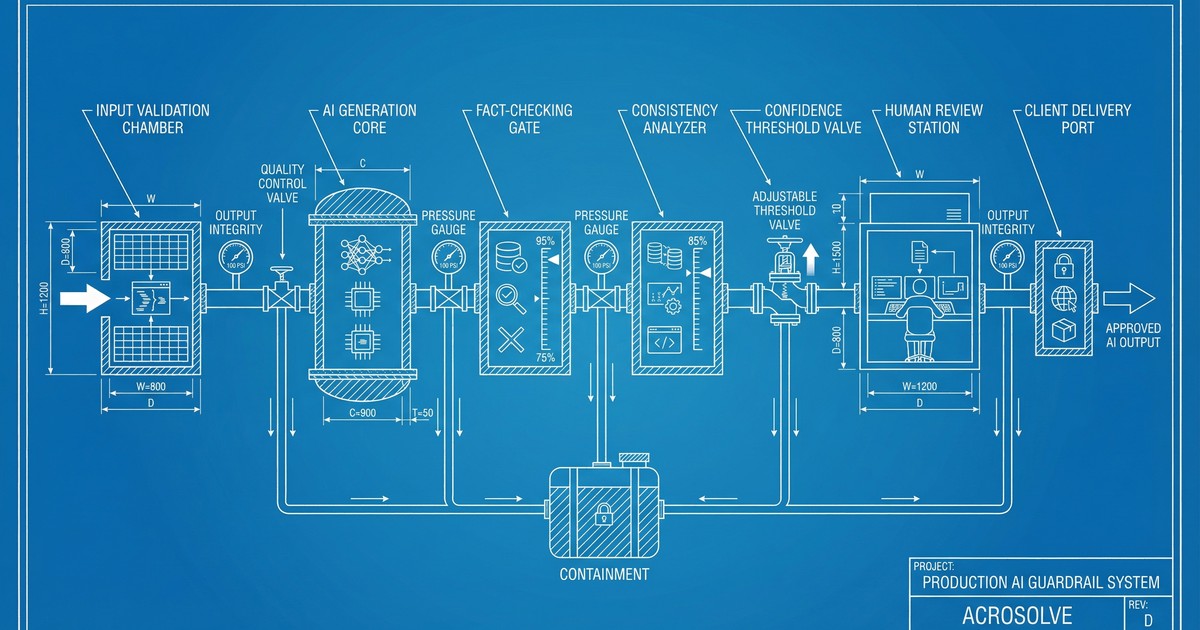

2. Build guardrails before you build features

Before you deploy an AI workflow, define what “wrong” looks like and build checks for it.

For a proposal automation workflow, that means:

- Fact-checking gates: Does the output reference real statistics? Cross-check against a source database.

- Consistency checks: Does the output follow the approved template structure? Flag deviations.

- Confidence thresholds: If the AI is uncertain about a section, flag it for human review instead of guessing.

- PII detection: Scan outputs for personal data that should not be included.

Enterprise tools now check model outputs for hallucination, relevance, and bias. But you do not need enterprise tooling to start. A simple checklist that your team runs before sending any AI-generated content is a guardrail. The sophistication can grow with scale.
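As a rough illustration, three of the checks above can be automated in a few lines. Everything in this sketch is an assumption made for the example: the function names, the idea of an approved list of statistics, and the deliberately simple patterns. The email pattern will miss many kinds of personal data. Confidence thresholds are left out because they depend on what signal your model or tool exposes.

```python
import re

# Simplified patterns for illustration only.
PERCENT_PATTERN = re.compile(r"\d+(?:\.\d+)?%")
EMAIL_PATTERN = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")


def find_unverified_stats(text: str, approved_stats: set[str]) -> list[str]:
    """Return percentage figures that are not in the approved source list."""
    return [s for s in PERCENT_PATTERN.findall(text) if s not in approved_stats]


def find_missing_sections(text: str, required_sections: list[str]) -> list[str]:
    """Return template section names that do not appear in the draft."""
    lowered = text.lower()
    return [s for s in required_sections if s.lower() not in lowered]


def find_emails(text: str) -> list[str]:
    """Return email addresses found in the draft (a crude PII check)."""
    return EMAIL_PATTERN.findall(text)


def run_guardrails(
    text: str, approved_stats: set[str], required_sections: list[str]
) -> dict[str, list[str]]:
    """Run all checks and return only the ones that found a problem."""
    issues = {
        "unverified_stats": find_unverified_stats(text, approved_stats),
        "missing_sections": find_missing_sections(text, required_sections),
        "possible_pii": find_emails(text),
    }
    return {name: found for name, found in issues.items() if found}
```

An empty result does not mean the draft is correct. It only means the draft passed these specific checks and is ready for human review.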

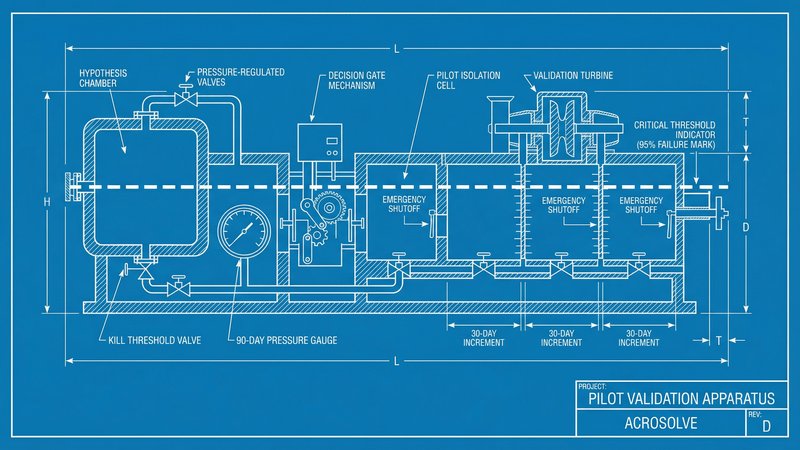

3. Scope narrowly, then expand

The most reliable AI systems do one thing well, not ten things poorly.

Instead of “AI handles all client communication,” start with “AI drafts the initial project scope email based on the intake form.” That single, narrow scope lets you:

- Test edge cases thoroughly

- Build specific guardrails for that workflow

- Measure reliability with clear metrics

- Build team trust through consistent results

Once that one workflow is running reliably for a month, expand to the next. This is slower than deploying AI everywhere at once, but it is the approach that actually reaches production. The alternative, deploying broadly and hoping for the best, is how use cases end up among the 69% that never reach production. For a complete framework on how to structure this, see "Why Your AI Project Needs a Pilot, Not a Roadmap."

4. Monitor outputs, not just uptime

Traditional software monitoring checks: is the system running? AI monitoring needs to check: is the system producing good results?

This means tracking:

- Output quality over time. Are the AI-generated drafts getting better or worse? Is accuracy trending in the right direction?

- Human correction rate. What percentage of AI outputs require significant edits before they are client-ready? If that number is going up, something changed.

- Edge case frequency. How often does the AI produce something obviously wrong? Log every failure, even minor ones. Patterns in failures reveal systemic issues.

89% of organizations with production AI have implemented some form of observability. For a service business, this does not need to be a complex monitoring dashboard. A shared log where team members note when AI produced something wrong, and what the correction was, gives you the data you need to improve the system over time.
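The human correction rate is the easiest of these to compute. The sketch below assumes each review is recorded with a date and a yes/no flag for whether significant edits were needed. The `ReviewRecord` fields and the five-percentage-point tolerance are hypothetical choices for illustration, not a standard.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import date


@dataclass
class ReviewRecord:
    reviewed_on: date
    needed_significant_edits: bool


def weekly_correction_rate(records: list[ReviewRecord]) -> dict[str, float]:
    """Map each ISO week (e.g. '2025-W03') to the share of outputs edited."""
    totals: dict[str, int] = defaultdict(int)
    corrected: dict[str, int] = defaultdict(int)
    for record in records:
        iso = record.reviewed_on.isocalendar()
        week = f"{iso[0]}-W{iso[1]:02d}"
        totals[week] += 1
        corrected[week] += int(record.needed_significant_edits)
    return {week: corrected[week] / totals[week] for week in sorted(totals)}


def is_trending_up(rates: dict[str, float], tolerance: float = 0.05) -> bool:
    """True if the latest week is worse than the previous one by more than the tolerance."""
    values = list(rates.values())
    return len(values) >= 2 and values[-1] - values[-2] > tolerance
```

With only a handful of reviews per week the rate will be noisy, so treat a single jump as a prompt to look at the log, not as proof that something broke.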

The Trust Equation

Reliability is a trust problem as much as a technical one. And trust operates differently for AI than for human team members.

When a human on your team makes a mistake, you correct them and move on. You trust that they learned. When AI makes a mistake, the instinct is to stop trusting it entirely. One hallucinated statistic in a proposal, and the whole team goes back to writing manually.

This all-or-nothing response is natural but counterproductive. The practical approach is to treat AI like a junior team member: capable, fast, but needs supervision. You would not give a new hire unsupervised access to client deliverables on day one. You should not give AI unsupervised access either.

Over time, as the system proves reliable in a specific workflow, you can reduce the oversight. But you never remove it entirely. Even the most reliable AI systems need periodic human review, the same way even your best employee’s work gets a second pair of eyes on important deliverables.

The Competitive Angle

In 2026, competitive advantage will come from governing AI well. The businesses that maintain visibility, clear ownership, and rapid intervention when something goes wrong are the ones that earn trust, from their teams and from their clients.

Vendors are moving beyond “black box” assistants to transparent, audit-ready AI that can link back to exact sources. But you do not need to wait for perfect tooling to start deploying reliably. The patterns above work with any model, any tool, any budget.

The businesses winning with AI right now figured out how to make it reliable enough to trust with real work.

Where to Start

Three things you can implement this week:

- Establish the “no unsupervised output” rule. Every AI-generated deliverable gets human review before it reaches a client. No exceptions. This single rule prevents 90% of reliability failures.

- Pick one workflow and define “wrong.” What would a bad output look like for your most common AI use case? Write those failure modes down. They become your guardrails.

- Start a correction log. When anyone on the team fixes an AI output, note what was wrong and what the fix was. After two weeks, you will have a clear picture of your system’s actual reliability and exactly where to improve it.
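A shared spreadsheet is enough for the correction log. If you would rather script it, a minimal sketch could look like the following. The file layout, column names, and function names are assumptions made for this example.

```python
import csv
from collections import Counter
from datetime import date
from pathlib import Path
from typing import Optional

# Hypothetical column layout for the log file.
FIELDS = ["date", "workflow", "what_was_wrong", "fix"]


def log_correction(
    log_path: Path,
    workflow: str,
    what_was_wrong: str,
    fix: str,
    on: Optional[date] = None,
) -> None:
    """Append one correction to a CSV file, writing the header on first use."""
    is_new = not log_path.exists()
    with log_path.open("a", newline="", encoding="utf-8") as handle:
        writer = csv.DictWriter(handle, fieldnames=FIELDS)
        if is_new:
            writer.writeheader()
        writer.writerow(
            {
                "date": (on or date.today()).isoformat(),
                "workflow": workflow,
                "what_was_wrong": what_was_wrong,
                "fix": fix,
            }
        )


def corrections_by_workflow(log_path: Path) -> Counter:
    """Count logged corrections per workflow to show where failures cluster."""
    with log_path.open(newline="", encoding="utf-8") as handle:
        return Counter(row["workflow"] for row in csv.DictReader(handle))
```

After two weeks, `corrections_by_workflow` gives a quick view of which workflow needs attention first.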

Want to know which of your workflows are ready for reliable AI deployment? Take the AI Readiness Assessment and we will show you where to start, with reliability built in from day one.

Thom Hordijk

Founder

