The Dark Side of Vibe Coding for Businesses

One year after the birth of vibe coding, the security incidents are adding up. Learn why speed without safety puts your business data at risk.

What One Year of Vibe Coding Has Taught Us

Exactly one year ago, on February 3, 2025, Andrej Karpathy introduced a term that shaped how people talk about AI development. The former Tesla AI director and OpenAI founding member described “a new kind of coding” where you “fully give in to the vibes” because AI tools “are getting too good.”

The term caught on. Collins Dictionary named “vibe coding” the Word of the Year for 2025. Developers started letting AI write entire applications based on loose descriptions. No specifications. No tests. Just vibes.

Twelve months later, security researchers have documented dozens of critical vulnerabilities in vibe-coded applications. Real businesses have had customer data exposed. Karpathy himself now advocates for a different approach he calls “agentic engineering.”

In October we wrote about what vibe coding means for business owners and why it creates real opportunities for small businesses. This article is the other side of that coin.

Let’s focus on the risks of vibe coding, why it matters for your business, and how to use AI in development without the security risks.

Speedboat vs Cruise Ship: The Risk Trade-off

Large corporations prioritize not making mistakes. Every decision requires approval chains. Every new technology needs a security review. Every project has a compliance checklist. This is deliberate. A data breach at a multinational means regulatory investigations, class action lawsuits, and front-page news coverage. So they build processes that focus on risk mitigation above all else.

Smaller businesses operate differently. You can try new tools without a six-month procurement process. You can adapt quickly and beat larger competitors to market. Think of it as a speedboat versus a cruise ship. The cruise ship prioritizes stability and safety. The speedboat prioritizes agility and speed.

That agility is a genuine competitive advantage. But it comes with a trade-off: smaller businesses often focus less on risks. When you move fast, security checks get skipped. Code reviews get shortened. Testing gets minimal.

Vibe coding amplifies this trade-off. It makes the speedboat faster, but it also makes the hull thinner. The question is whether you are building something that can handle rough water.

Where Vibe Coding Actually Works

Before we discuss the risks, let me be clear: vibe coding has legitimate uses.

Proof of concept development. Testing whether an idea is worth pursuing before investing significant resources.

Market validation. Getting something in front of users fast to see if they actually want it.

Internal tools. Quick scripts that only your team uses, with no customer data involved.

Learning and exploration. Understanding what is possible before committing to a full implementation.

These are low-stakes environments. The code might be messy. The security might be minimal. But the blast radius is small. If something breaks, you fix it. No customer data is compromised. No regulations are violated.

The problems start when this approach gets applied to systems that handle real customer data, process payments, or manage sensitive business information. And that is exactly what has been happening over the past year.

Security Incidents from the Past Year

The past twelve months have produced a growing list of security incidents tied to AI-generated code shipped without proper review. These are documented breaches affecting real users, not hypothetical scenarios.

ClawdBot/Moltbot: Thousands of Vulnerable Bots

In late January 2026, just two weeks ago, a new AI bot framework called ClawdBot went viral on social media. Developers loved it. Within days, thousands had deployed their own instances.

Security researchers quickly discovered critical vulnerabilities:

Exposed admin panels. Anyone could access the management interface without authentication.

Plaintext credential storage. API keys, user profiles, and conversation histories were stored in readable Markdown and JSON files. No encryption. No access controls.

Authentication bypass. A flaw in how the gateway handled localhost connections allowed external attackers to bypass login protections when deployed behind common reverse proxies like Nginx.

Supply chain compromise. A proof-of-concept attack on ClawdHub showed how a malicious skill could be uploaded and its download count artificially inflated, leading developers from seven countries to download the poisoned package.

When the project was renamed to Moltbot following legal concerns, scammers immediately grabbed the abandoned @clawdbot handles on X and GitHub.

Basic security practices would have prevented every one of these vulnerabilities. Authentication, encrypted storage, input validation. Standard practices that got skipped in the rush to ship.
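To make the localhost problem concrete, here is a minimal sketch of the general pattern. It is not ClawdBot's actual code. The function names and the token scheme are assumptions for illustration. When a reverse proxy runs on the same machine, every request reaches the application from 127.0.0.1, so a check that trusts localhost ends up trusting everyone.

```python
import hmac
from typing import Optional


def is_authorized_naive(remote_addr: str) -> bool:
    # Risky (hypothetical) check: trusts any connection from localhost.
    # Behind a reverse proxy on the same host, all external traffic
    # arrives with this address, so the check passes for everyone.
    return remote_addr == "127.0.0.1"


def is_authorized(remote_addr: str,
                  presented_token: Optional[str],
                  expected_token: str) -> bool:
    # Safer sketch: the source address is ignored entirely and a secret
    # token is always required, compared in constant time.
    if not presented_token:
        return False
    return hmac.compare_digest(presented_token.encode(),
                               expected_token.encode())
```

The point of the second function is that where a request appears to come from is never treated as proof of who sent it.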

Base44: The “Private” Applications That Were Not Private

In July 2025, security researchers discovered a vulnerability in Base44, a platform for building applications with AI. The platform had a privacy toggle that let users mark their applications as “private.”

The problem: the privacy toggle was cosmetic. There was no actual access control behind it.

Unauthenticated attackers could access any “private” application on the platform. The AI-generated code checked whether an application was marked private in the user interface. It did not check whether the person requesting access actually had permission.

This is a pattern we see repeatedly. Security requires understanding what “private” actually means in terms of system behavior, not just interface labels.
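The difference between a label and an access check can be sketched in a few lines. This is an illustration of the pattern, not Base44's code. The App class, its fields, and can_view are hypothetical names.

```python
from dataclasses import dataclass, field
from typing import Optional, Set


@dataclass
class App:
    owner_id: str
    is_private: bool
    allowed_user_ids: Set[str] = field(default_factory=set)


def can_view(app: App, user_id: Optional[str]) -> bool:
    # Public apps are visible to anyone.
    if not app.is_private:
        return True
    # Private apps: an unauthenticated request (no user id) is refused.
    if user_id is None:
        return False
    # The decision depends on who is asking, not only on the flag.
    return user_id == app.owner_id or user_id in app.allowed_user_ids
```

A cosmetic toggle stops at reading is_private. A real access control also has to ask who is making the request, and it has to run on the server for every request.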

The December 2025 Study

A systematic study in December 2025 examined 15 applications built primarily with AI code generation. The researchers found 69 vulnerabilities across those 15 applications.

Roughly half a dozen were rated critical. The common patterns were predictable:

- Missing authentication or authorization

- SQL injection that let attackers read or change the database

- Hardcoded secrets and credentials in the codebase

- Admin functionality exposed to public users

These are not new vulnerability types. They are the same security failures that have plagued software for decades. AI code generation made them faster to produce.
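SQL injection is the easiest of these to show. The sketch below uses Python's built-in sqlite3 module with an in-memory database. The table, the data, and the function names are made up for the example.

```python
import sqlite3


def make_db() -> sqlite3.Connection:
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (name TEXT, role TEXT)")
    conn.executemany("INSERT INTO users VALUES (?, ?)",
                     [("alice", "admin"), ("bob", "user")])
    return conn


def find_user_unsafe(conn: sqlite3.Connection, name: str) -> list:
    # Vulnerable: user input is pasted straight into the SQL text.
    query = f"SELECT name, role FROM users WHERE name = '{name}'"
    return conn.execute(query).fetchall()


def find_user_safe(conn: sqlite3.Connection, name: str) -> list:
    # Parameterized query: the driver treats the input purely as data.
    return conn.execute(
        "SELECT name, role FROM users WHERE name = ?", (name,)
    ).fetchall()


conn = make_db()
payload = "' OR '1'='1"
print(len(find_user_unsafe(conn, payload)))  # every row in the table
print(find_user_safe(conn, payload))         # no rows
```

With the unsafe version, the payload turns the WHERE clause into a condition that is always true, so the query returns every user. The parameterized version looks for a user literally named `' OR '1'='1` and finds none.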

Why This Keeps Happening

The 90% Accuracy Problem

AI code generation is remarkably good at producing working code. For many prompts, it might be accurate 90% of the time. That sounds impressive until you chain multiple steps together.

Consider a workflow with three steps, each 90% accurate:

- Step 1: 90% accuracy

- Step 2: 90% accuracy

- Step 3: 90% accuracy

- Combined accuracy: 90% × 90% × 90% = 72.9%, or roughly 73%

Every additional step compounds the error rate. If each step fails independently of the others, a 10-step workflow with 90% accuracy per step succeeds only about 35% of the time. And those failures are often silent. The code runs. It just does the wrong thing.
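The arithmetic is easy to check. This sketch assumes every step succeeds or fails independently, which is a simplification. The function name is ours.

```python
def chain_success_rate(per_step: float, steps: int) -> float:
    # Probability that every step in the chain succeeds,
    # assuming the steps are independent of each other.
    return per_step ** steps


for steps in (1, 3, 10):
    print(f"{steps} steps: {chain_success_rate(0.9, steps):.0%}")
# 1 steps: 90%
# 3 steps: 73%
# 10 steps: 35%
```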

Invisible Technical Debt

Technical debt is a well-understood concept in software development. You cut corners now and pay interest later in the form of maintenance costs and bug fixes.

Vibe coding creates a new variant: invisible technical debt. The code works, so nobody reviews it closely. The security gaps exist, but they are not obvious from the output. Everything functions correctly until someone malicious finds the vulnerability.

Security Is Not a Feature You Add Later

Many developers treat security like a coat of paint. Build the house first, then secure it later. But security is architectural. It needs to be designed into the foundation.

Retrofitting authentication and access controls into an existing system is dramatically more expensive than building them correctly from the start. And during the gap between deployment and remediation, your customer data is exposed.

The Business Cost Calculation

Consider the numbers:

- Average cost of a data breach for small businesses: €120,000-200,000

- Regulatory fines under GDPR: up to €20 million or 4% of annual worldwide turnover, whichever is higher

- Reputation damage: incalculable, often fatal for service businesses

- Customer lawsuits: legal fees plus settlements

Compare those numbers to the cost of proper code review before deployment. The economics are clear.

What Enterprise Clients Actually Need

After 8 years of solving technology problems for medium and large enterprises, I have seen what these organizations require. Their processes exist for a reason: they have experienced what happens when systems fail at scale.

Here is what enterprise clients actually require:

Deterministic systems. Business logic should produce the same output for the same input, every time. Probabilistic guessing is not acceptable for invoicing, contracts, or compliance. When a calculation is wrong, you need to know exactly why.

Audit trails. Who did what, when, and why. When something goes wrong, whether through error or malice, you need to trace the decision back to its source. This is not optional for regulated industries.

Human-in-the-loop. Critical decisions require human approval. The system can recommend, but a person must confirm. No automated system should be able to send funds, delete records, or grant access without human verification.

Permission-based access. Not everyone should see everything. Meeting transcripts, financial data, and customer information need proper access controls. An intern should not have the same system access as the CEO.
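Two of these requirements, audit trails and human-in-the-loop, fit together naturally. The sketch below shows one way to combine them. All names (AuditLog, send_payment, the actor strings) are hypothetical, and a real system would write the log to durable, tamper-resistant storage rather than a list in memory.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import List, Optional


@dataclass
class AuditLog:
    entries: List[dict] = field(default_factory=list)

    def record(self, actor: str, action: str, detail: str) -> None:
        # Who did what, and when.
        self.entries.append({
            "at": datetime.now(timezone.utc).isoformat(),
            "actor": actor,
            "action": action,
            "detail": detail,
        })


def send_payment(amount_cents: int, recommended_by: str,
                 approved_by: Optional[str], log: AuditLog) -> bool:
    # The system may recommend a payment, but nothing is sent
    # until a named person has approved it.
    if approved_by is None:
        log.record(recommended_by, "payment_held",
                   f"{amount_cents} cents awaiting human approval")
        return False
    log.record(approved_by, "payment_sent",
               f"{amount_cents} cents, recommended by {recommended_by}")
    return True
```

Every call leaves a log entry, whether the payment goes through or not, so a held payment is as traceable as a sent one.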

How to Adopt AI Without the Risks

The path forward is using AI responsibly.

For Prototypes and Experiments

Use vibe coding freely for throwaway code. That is what it is good for. But follow these rules:

- Never connect prototypes to real customer data

- Label experimental systems clearly so everyone knows their status

- Have an explicit graduation process before going to production

- Treat the prototype as a specification, not as production code

For Production Systems

Code review is non-negotiable. Every AI-generated code block needs human review before deployment.

Focus your review on:

- Authentication: Does every endpoint verify the user’s identity?

- Authorization: Does every action check whether the user has permission?

- Input validation: What happens with unexpected or malicious input?

- Error handling: Do errors reveal sensitive information?

Ask yourself: “What happens if someone malicious uses this?” If you cannot answer that question, the code is not ready for production.

Test for the obvious vulnerabilities:

- Can unauthenticated users access protected routes?

- Are credentials hardcoded anywhere in the codebase?

- What happens when someone enters SQL syntax into text fields?

- Are admin functions exposed to regular users?
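Some of these checks can be partly automated. As a rough sketch, the script below flags lines that look like hardcoded credentials. The pattern is our own simplification and will miss many cases, so treat it as a first pass. It is not a replacement for a dedicated secret scanner or a human review.

```python
import re
from typing import List

# Rough heuristic: a name containing key/secret/password/token,
# assigned a quoted literal of at least eight characters.
SECRET_PATTERN = re.compile(
    r"""(api[_-]?key|secret|password|token)\w*\s*[:=]\s*["'][^"']{8,}["']""",
    re.IGNORECASE,
)


def find_hardcoded_secrets(source: str) -> List[int]:
    # Returns the 1-based line numbers that match the heuristic.
    return [
        number
        for number, line in enumerate(source.splitlines(), start=1)
        if SECRET_PATTERN.search(line)
    ]
```

A line such as `API_KEY = "sk-live-1234567890"` is flagged, while a line that reads the value from an environment variable is not.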

Separate concerns. Keep business logic in deterministic code. Use AI for routing and decision support. Never let AI execute arbitrary code without proper sandboxing.
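One way to picture this separation: the AI only proposes the name of an action, and ordinary code decides whether that action exists and then runs it. The sketch below assumes a hypothetical allowlist with a single invoice calculation. The names and the integer-cents convention are illustrative choices.

```python
def calculate_invoice_total(net_cents: int, vat_percent: int) -> int:
    # Deterministic business logic: same input, same output.
    # Integer cents avoid floating-point surprises; VAT is rounded down.
    return net_cents + (net_cents * vat_percent) // 100


# Only actions listed here can ever be executed.
ALLOWED_ACTIONS = {
    "invoice_total": calculate_invoice_total,
}


def dispatch(action: str, **kwargs):
    # `action` is whatever the AI suggested. Anything not on the
    # allowlist is rejected instead of being executed.
    handler = ALLOWED_ACTIONS.get(action)
    if handler is None:
        raise ValueError(f"action not allowed: {action!r}")
    return handler(**kwargs)


print(dispatch("invoice_total", net_cents=10000, vat_percent=21))  # 12100
```

The AI can be wrong about which action to pick, but it cannot invent a new one, and the calculation itself never depends on a model's output.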

For Vendor Selection

When evaluating AI-powered tools or hiring developers who use AI:

- Ask about their testing process

- Ask how they handle user permissions

- Ask what happens to your data

Red flag: “The AI handles all of that automatically.”

The Shift to Agentic Engineering

It is telling that Andrej Karpathy, who coined “vibe coding,” now advocates for “agentic engineering.” The shift in terminology is deliberate.

Vibe coding means giving in to the vibes, letting AI handle it, hoping for the best. Agentic engineering means designing systems where AI agents operate within defined boundaries, with proper oversight and control.

The industry is maturing. The early excitement about AI-generated code is giving way to a more nuanced understanding. Yes, AI dramatically accelerates development. No, that does not mean you skip the engineering discipline that makes software safe and reliable.

The competitive advantage goes to businesses that adopt AI responsibly:

- Faster than businesses that avoid AI entirely

- Safer than businesses that adopt AI without guardrails

The winner is the fastest boat that stays afloat.

Moving Forward

The core lesson from this past year is simple: building a prototype quickly does not mean you have a production-grade application. Vibe coding can get you to a working demo in hours. It cannot get you to secure, reliable software without the engineering work that has always been required.

Use vibe coding for what it does well: exploring ideas, validating concepts, building internal tools. But when customer data, payments, or business-critical operations are involved, treat AI-generated code as a starting point, not a finished product.

Want to see how your business can adopt AI automation safely? Schedule a free AI Readiness Assessment and we will map out an approach that delivers speed without the security risks.

Thom Hordijk

Founder

Get posts like this in your inbox every week

Weekly insights on AI and automation for B2B service businesses. No hype, just what works.

Related Articles

View all articles

Why Security Is the Real AI Adoption Bottleneck

Most AI adoption stalls on security concerns, not cost or talent. Learn how to address data privacy, GDPR, and AI security for business the right way.

Is AI Finally Built for Small Businesses?

AI used to demand enterprise budgets and IT teams. That's changed. Here's what's different now and how to tell if it's right for your business.

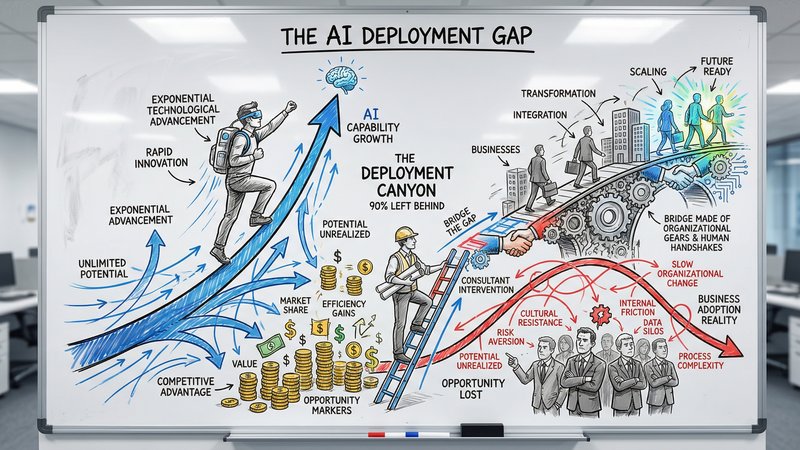

90% of Businesses Don't Use AI. That's the Opportunity.

90% of US businesses still don't use AI in production. The gap between capability and deployment is where the real opportunity lives for service businesses.