Why Your AI Project Needs a Pilot, Not a Roadmap

95% of AI pilots deliver zero P&L impact. The problem is not AI. It is big-bang transformation. Here is how to run a 90-day pilot that actually scales.

The Lesson Nobody Wanted to Learn

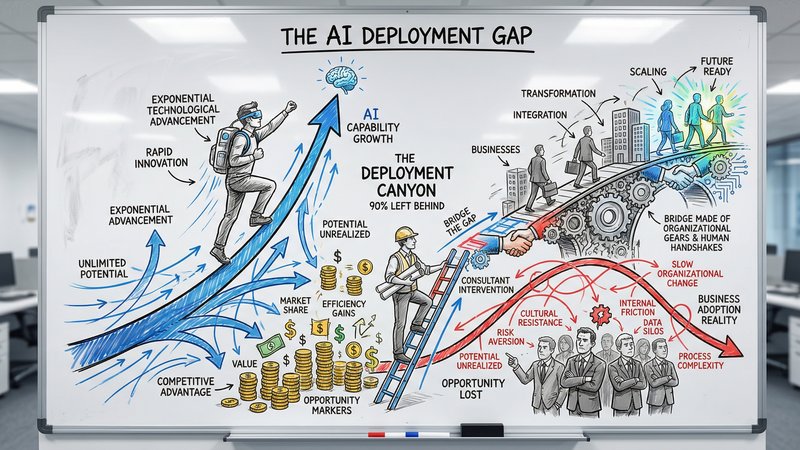

In 2024, enterprises collectively invested €28-37 billion in generative AI pilots. The vast majority of that delivered zero measurable return.

That number should give pause to every business owner sitting across from a vendor pitching a 12-month AI transformation roadmap. The problem was not the technology. The problem was the approach.

MIT’s NANDA Institute published a study in July 2025 that quantified this. They interviewed 52 executives, surveyed 153 AI leaders, and analyzed over 300 public deployments. Their conclusion: 95% of corporate GenAI pilots delivered zero measurable impact on the P&L. Not disappointing results. Zero.

BCG’s research from October 2024 paints the same picture from a different angle. 74% of companies struggle to achieve and scale AI value. Only 4% generate substantial returns. The rest are stuck somewhere between “we have an AI strategy” and “we have AI results.”

The instinct for most businesses seeing these numbers is to conclude that AI does not work. That is the wrong lesson. The 5% who succeed are not using better AI. They are using a fundamentally different approach to deploying it.

Why Big Transformation Plans Fail

The standard playbook for AI adoption goes like this: hire a consultancy, run a multi-month assessment, build a comprehensive roadmap covering every department, allocate a large budget, and execute over 12-18 months.

By month six, the technology landscape has shifted. The models you evaluated are outdated. Half the use cases on the roadmap no longer make sense. The team that was supposed to drive adoption is burned out from the planning phase. And you have spent significant money on strategy documents that sit in shared drives.

BCG identified why this happens with their 10-20-70 rule: 70% of AI transformation challenges are people and process, 20% are technology, and only 10% are the algorithm. The roadmap mostly addresses the 30% that is technology and algorithms. The 70% that actually determines success is organizational change, and organizational change does not respond to Gantt charts.

We see this pattern in our own conversations with service businesses.

Scope creep kills momentum. A prospect comes to us wanting to automate proposal writing. By the second meeting, the scope has expanded to “redesign the entire sales workflow.” We push back. Start with the proposals. Prove it works. Then expand.

Data prep is underestimated every time. One company we spoke with had client information scattered across Monday.com, email threads, and spreadsheets. Nobody had the full picture. Before any AI could help, their data needed organizing. Data preparation consumes 60-80% of actual AI project work, but most budgets only allocate 20-30% to it.

One bad experience creates organizational antibodies. 42% of companies abandoned most AI initiatives in 2025, up from 17% in 2024. We regularly talk to businesses where the team already tried AI with a freelancer or a rushed experiment. It did not work. Now the response to every AI suggestion is: “We tried that.” This pattern is why 88% of AI implementations fail, and it is almost always a people problem, not a technology one.

McKinsey called it having “more pilots than Lufthansa.” Disconnected experiments visible everywhere except the income statement.

The Pilot Alternative

The businesses in the 5% who generate real returns from AI share a common pattern: they started small, measured relentlessly, and scaled only what worked.

A separate study analyzing 200 B2B deployments across European SMBs, published in late 2025 by French AI consultancy ENDKOO, found that projects with smaller initial budgets (under €15,000) achieved 2.1 times higher ROI than large-scale deployments. The median break-even was 8 months, with median ROI of 160% over 24 months.

This is not a marginal difference. Spending less upfront more than doubled the return.

The reason is straightforward. A small pilot forces clarity. You cannot automate “everything” in 90 days. You have to pick one specific problem, define exactly what success looks like, and prove value before spending more. That constraint is the feature, not the limitation.

Real Examples

Guardian Life Insurance (US) piloted AI on their RFP and quoting process. The result: a workflow that took 7 days was reduced to 24 hours. One process, one team, measurable outcome.

Osborne Clarke, a European law firm, piloted AI time capture with a small group of users. Each user recovered 1.5 hours per week, and over 70% reported surfacing missed billable time. The pilot proved value before the firm committed to a wider rollout.

An accounts payable team spent €450 on a pilot to automate invoice data entry. The team had been spending 20 hours per week on manual processing. By day 60, the pilot hit its target of 75% reduction. That single investment permanently saves 15 hours every week.

Ecosio, an HR platform, piloted AI for payroll processing. The result: 75% reduction in processing time, 706% ROI in under three months.

Notice the pattern. Each of these started with one workflow, one team, and a clear metric. None started with a roadmap.

The 90-Day Pilot Framework

A good AI pilot has seven non-negotiable components. Skip any of them and you join the 95%.

1. A specific problem, not an AI strategy

“Implement AI across the organization” is not a pilot. “Reduce proposal writing time from 4 hours to 1 hour for the sales team” is a pilot.

The problem should be painful enough that the team is motivated to solve it. Proposal writing, invoice processing, client onboarding, meeting follow-ups, report generation. Pick the task your team dreads most. The pain drives adoption.

This matches what we hear in sales conversations. A creative agency spending hours on manual proposals for every new client. A consultancy whose CRM only captures warm prospects because nobody has time to enter the rest. A services firm drowning in follow-up emails after every client meeting. These are specific, measurable problems. They make good pilots.

Evaluate candidates on data availability, business impact, stakeholder support, and measurability. If you cannot measure the current state with a number, you cannot measure improvement.
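One way to apply those four criteria is a simple scorecard. The sketch below is illustrative only: the `Candidate` class, the 1-5 scale, the equal weighting, and the example scores are all assumptions, not part of any study cited here. The one rule it takes from the text is that a candidate without a measurable baseline is excluded.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Candidate:
    """Hypothetical scorecard entry; each criterion is rated 1 (weak) to 5 (strong)."""
    name: str
    data_availability: int
    business_impact: int
    stakeholder_support: int
    measurability: int
    baseline: Optional[float] = None  # current-state number, e.g. hours per proposal

    def score(self) -> int:
        # Equal weighting is an assumption; adjust to your own priorities.
        return (self.data_availability + self.business_impact
                + self.stakeholder_support + self.measurability)


def rank_candidates(candidates: List[Candidate]) -> List[Candidate]:
    """Drop candidates without a baseline number, then sort by total score."""
    measurable = [c for c in candidates if c.baseline is not None]
    return sorted(measurable, key=lambda c: c.score(), reverse=True)


# Illustrative values only.
candidates = [
    Candidate("proposal writing", 4, 5, 4, 5, baseline=4.0),
    Candidate("meeting follow-ups", 3, 3, 4, 3, baseline=1.5),
    Candidate("sales workflow redesign", 2, 5, 3, 1),  # no baseline, so excluded
]

for candidate in rank_candidates(candidates):
    print(candidate.name, candidate.score())
```

The scores matter less than the filter: anything without a current-state number never makes the shortlist.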

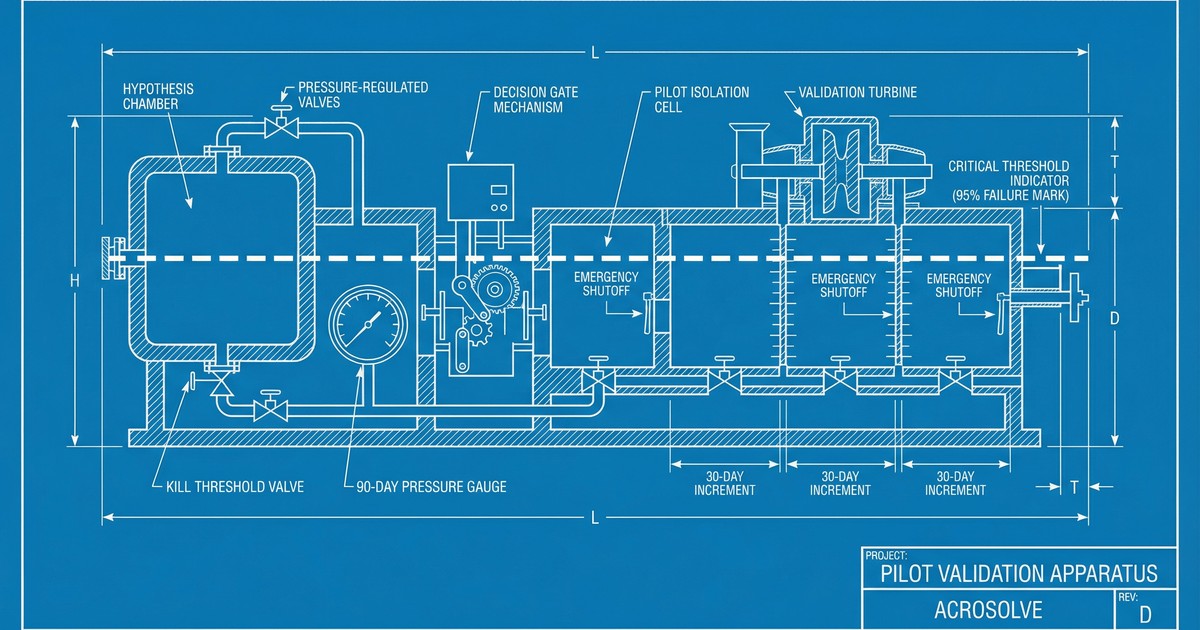

2. A hypothesis with a kill threshold

Write this down before you start:

“We believe that [specific AI solution] for [specific team] will [specific outcome] by [specific percentage], measurable within [specific timeframe].”

Then define two thresholds:

- Success threshold: The minimum result that justifies scaling. “If we hit 50% reduction in processing time, we expand to the full team.”

- Failure threshold: The result that kills the project. “If we are below 20% reduction by day 60, we stop.”

88% of AI pilots never reach production, and that failure rate is largely attributable to not defining these criteria upfront. Without a kill threshold, bad pilots never die. They just drift into pilot purgatory. 56% of organizations are stuck there right now.
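Writing the hypothesis and both thresholds down as data, before the pilot starts, makes them hard to renegotiate later. A minimal sketch, assuming a hypothetical `PilotHypothesis` record: the class, field names, and the proposal-writing example are illustrative, and the 50%, 20%, and day-60 values are the examples from the thresholds above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PilotHypothesis:
    """Hypothetical record of a pilot hypothesis and its two thresholds."""
    solution: str
    team: str
    outcome: str
    success_pct: float    # minimum reduction that justifies scaling
    failure_pct: float    # reduction below which the pilot is stopped
    checkpoint_day: int   # day on which the failure threshold is checked
    timeframe_days: int = 90

    def __post_init__(self):
        # The kill threshold must sit below the success threshold.
        if not 0 <= self.failure_pct < self.success_pct <= 100:
            raise ValueError("need 0 <= failure_pct < success_pct <= 100")

    def statement(self) -> str:
        """Fill in the hypothesis template from the recorded fields."""
        return (f"We believe that {self.solution} for {self.team} will "
                f"{self.outcome} by {self.success_pct:.0f}%, "
                f"measurable within {self.timeframe_days} days.")

    def should_stop(self, day: int, measured_reduction_pct: float) -> bool:
        """True once the checkpoint is reached and the result is below the kill threshold."""
        return day >= self.checkpoint_day and measured_reduction_pct < self.failure_pct


hypothesis = PilotHypothesis(
    solution="AI-drafted proposals",
    team="the sales team",
    outcome="reduce proposal writing time",
    success_pct=50,
    failure_pct=20,
    checkpoint_day=60,
)

print(hypothesis.statement())
print(hypothesis.should_stop(day=60, measured_reduction_pct=15))
```

Because the record is frozen, the thresholds cannot be quietly edited once the pilot is running.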

3. A 90-day timeline

The consensus across practitioners and researchers is that 90 days is the right duration for an AI pilot. Shorter than that does not give the team time to adapt. Longer than that loses momentum and starts to feel like a permanent experiment.

Structure it in three phases:

Weeks 1-2: Tool selection, data preparation, and team training. Get the infrastructure running and make sure the people involved understand what they are testing and why. If you are unsure whether you need a simple prompt, a knowledge base, or a custom model, this decision framework helps you pick the right approach before committing budget.

Weeks 3-8: Active pilot. The team uses the AI tool in their real workflow with real client work. Track metrics weekly. Address issues immediately.

Weeks 9-12: Measurement, analysis, and decision. Compare results against your hypothesis. Run the decision gate.

4. A realistic budget

For a service business, a well-scoped AI pilot costs €3,000-15,000. That covers tool subscriptions, setup and integration, and the team’s time investment during the pilot period.

That is not a guess. The ENDKOO study of 200 European B2B deployments found that this budget range consistently outperformed larger investments on ROI. The constraint forces you to pick a problem that can actually be solved within those bounds, which is exactly the kind of problem most likely to succeed.

For smaller teams under 50 people, the lower end of that range is typical. A single workflow pilot with off-the-shelf AI tools and a clear scope. For larger service businesses with more complex processes and more stakeholders, budget closer to €10,000-15,000 to account for integration work and broader team involvement.

5. A small team with one owner

For a business under 50 people, the pilot team might be 3-5 people, or even just one person running a focused experiment. For larger service businesses, 5-15 people is the right pilot size. Either way: one named person owns the outcome, and one decision-maker can approve the scale decision.

Assign dedicated time during the active phase. If nobody has time carved out for this, the pilot will not get the attention it needs, and the results will reflect that.

6. A weekly operating cadence

Check in weekly during the active phase. Not a status meeting. An operating review:

- What is the AI producing? Show real examples.

- What corrections is the team making? Log every one.

- Are the metrics moving in the right direction?

Monthly, translate the operational metrics into financial impact. Hours saved times hourly cost equals euro value. Keep the math simple and honest.
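The monthly translation can be sketched in a few lines. The function names and the €40 hourly cost below are assumptions for illustration. The 20 hours per week, 75% reduction, and €450 pilot cost come from the accounts payable example earlier in this article.

```python
def monthly_value_eur(hours_saved_per_week: float, hourly_cost_eur: float,
                      weeks_per_month: float = 52 / 12) -> float:
    """Hours saved times hourly cost, scaled from a weekly to a monthly figure."""
    return hours_saved_per_week * weeks_per_month * hourly_cost_eur


def payback_weeks(pilot_cost_eur: float, hours_saved_per_week: float,
                  hourly_cost_eur: float) -> float:
    """Weeks of savings needed to cover the pilot cost."""
    weekly_value = hours_saved_per_week * hourly_cost_eur
    if weekly_value <= 0:
        raise ValueError("payback is undefined without positive savings")
    return pilot_cost_eur / weekly_value


baseline_hours_per_week = 20   # from the accounts payable example
reduction = 0.75               # from the accounts payable example
hourly_cost_eur = 40.0         # assumption: replace with your loaded hourly cost

hours_saved = baseline_hours_per_week * reduction
print(f"Hours saved per week: {hours_saved:.1f}")
print(f"Monthly value: EUR {monthly_value_eur(hours_saved, hourly_cost_eur):,.0f}")
print(f"Payback: {payback_weeks(450, hours_saved, hourly_cost_eur):.2f} weeks")
```

With the assumed €40 hourly cost, 15 saved hours are worth €600 per week. The only input that needs debate is the hourly cost, which keeps the math honest.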

7. A day-90 decision gate

On day 90, there are exactly three options:

Scale: The pilot hit or exceeded the success threshold. Document what worked, train 2-3 internal champions, and roll out to the full team over the next 30 days.

Extend: Results are promising but inconclusive. Run one more 90-day cycle with adjusted parameters. Only extend once. If a second cycle does not hit the threshold, kill it.

Kill: Results are below the failure threshold. Stop. Document what you learned. Move to the next use case.

This is the discipline most organizations lack. The default tendency is to extend indefinitely, to tweak and adjust and run “just one more month.” That is how pilots become purgatory.
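The gate is simple enough to write as a function, which is part of its value: there is no branch for "one more month." A minimal sketch, assuming percentage-reduction metrics. The names `Decision` and `day_90_decision` are illustrative, and treating a result exactly at the failure threshold as inconclusive is an assumption.

```python
from enum import Enum


class Decision(Enum):
    SCALE = "scale"
    EXTEND = "extend"
    KILL = "kill"


def day_90_decision(measured_pct: float, success_pct: float, failure_pct: float,
                    already_extended: bool = False) -> Decision:
    """Return one of the three day-90 options for a measured improvement."""
    if measured_pct >= success_pct:
        return Decision.SCALE      # hit or exceeded the success threshold
    if measured_pct < failure_pct:
        return Decision.KILL       # below the failure threshold
    if already_extended:
        return Decision.KILL       # only extend once
    return Decision.EXTEND         # promising but inconclusive


print(day_90_decision(55, success_pct=50, failure_pct=20))
print(day_90_decision(35, success_pct=50, failure_pct=20))
print(day_90_decision(35, success_pct=50, failure_pct=20, already_extended=True))
```

The `already_extended` flag encodes the one-extension rule: a second inconclusive cycle returns a kill decision, not a third cycle.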

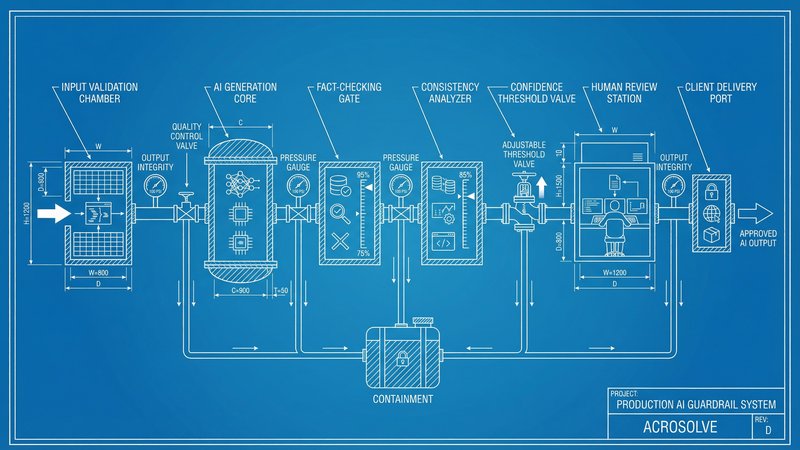

The Scaling Path

A successful pilot is not the end. It is the proof that unlocks the next step.

After a pilot hits the success threshold:

- Document everything. The process, the prompts, the tools, the edge cases. What worked, what did not. This becomes the playbook for scaling.

- Train internal champions. For a small team, that might be one person who becomes the go-to. For a larger organization, one champion per 8-10 team members. These are the people who answer questions and demonstrate the tool to skeptics.

- Roll out to the full team over 30 days. Not overnight. Gradual adoption with support available.

- Only then consider adjacent use cases. One at a time. Each new use case gets its own pilot before it gets budget.

The full implementation comes after two or more successful pilots prove the pattern works for your organization. Not before.

The Change Management Reality

The reason pilots work better than roadmaps is not just financial. It is psychological.

Nearly half of CEOs report that employees were resistant or hostile to AI-driven changes. 53% of AI users fear it makes them replaceable. 48% are uncomfortable admitting to their managers that they use AI. Mandating AI adoption through a top-down roadmap runs directly into this resistance.

We see this in our conversations too. A prospect told us their team was open to AI for routine tasks, but current AI suggestions still needed significant human involvement to be usable. The concern was not about the technology. It was about losing control of the output quality.

Pilots sidestep the resistance. A small team volunteers to try something new. They see results. They tell their colleagues. The colleagues get curious. Adoption spreads through demonstrated value, not management decree.

McKinsey’s guidance is concrete: for every €1 spent on AI development, plan €3 on change management. Companies with a clear people strategy are 2.6 times more likely to succeed with AI. The pilot model naturally embeds change management because the team’s own experience is the proof.

When You Do Need a Roadmap

There are situations where a broader strategic view is necessary. If your data infrastructure is so fragmented that no pilot can access clean data, you need to address that first. If regulatory requirements demand enterprise-wide compliance changes, those cannot be piloted incrementally.

But even in these cases, the execution should be phased. BCG and McKinsey both recommend piloting within the transformation rather than running a waterfall-style big-bang deployment. The roadmap defines the destination. Pilots determine the route.

Where to Start This Week

The math is simple. A €3,000-15,000 pilot with a 90-day timeline and a clear kill threshold costs less than most AI strategy consultancies charge for the assessment alone. If the pilot fails, you learned something specific for a fixed cost. If it succeeds, you have proof that justifies the next investment.

Three steps:

- Pick the pain point. What task does your team spend the most time on that follows a repeatable pattern? Proposal writing, data entry, report generation, client follow-ups. Pick the one with the clearest before-and-after metric.

- Write the hypothesis. “We believe [tool] will reduce [task] time from [X hours] to [Y hours] for [team], measurable in 90 days.” Define the success and failure thresholds before you start.

- Set the decision date. Put a day-90 meeting on the calendar now. Scale, extend, or kill. No other options.

The 95% failure rate is not about AI failing. It is about organizations trying to transform everything at once instead of proving one thing first.

Ready to identify the right pilot for your business? Take the AI Readiness Assessment and we will pinpoint the workflow with the highest probability of a successful first pilot.

Thom Hordijk

Founder
