RAG, Fine-Tuning, and Prompt Engineering: Which One Do You Actually Need?

Most businesses overspend on AI customization they do not need. Here is a practical decision framework for choosing between prompt engineering, RAG, and fine-tuning.

You Probably Do Not Need What You Think You Need

In nearly every sales conversation we have, the same question comes up in some form: “Do we need to train a custom AI model for this?”

The short answer, for almost every service business we talk to, is no.

A creative agency spending hours on manual proposals for every new client. A consultancy whose CRM only captures warm leads because nobody has time to enter the rest. A services firm whose AI-generated outreach emails still need heavy editing because the tone is off. These are real conversations we have had with businesses exploring AI. And in every case, the solution was simpler and cheaper than what they expected.

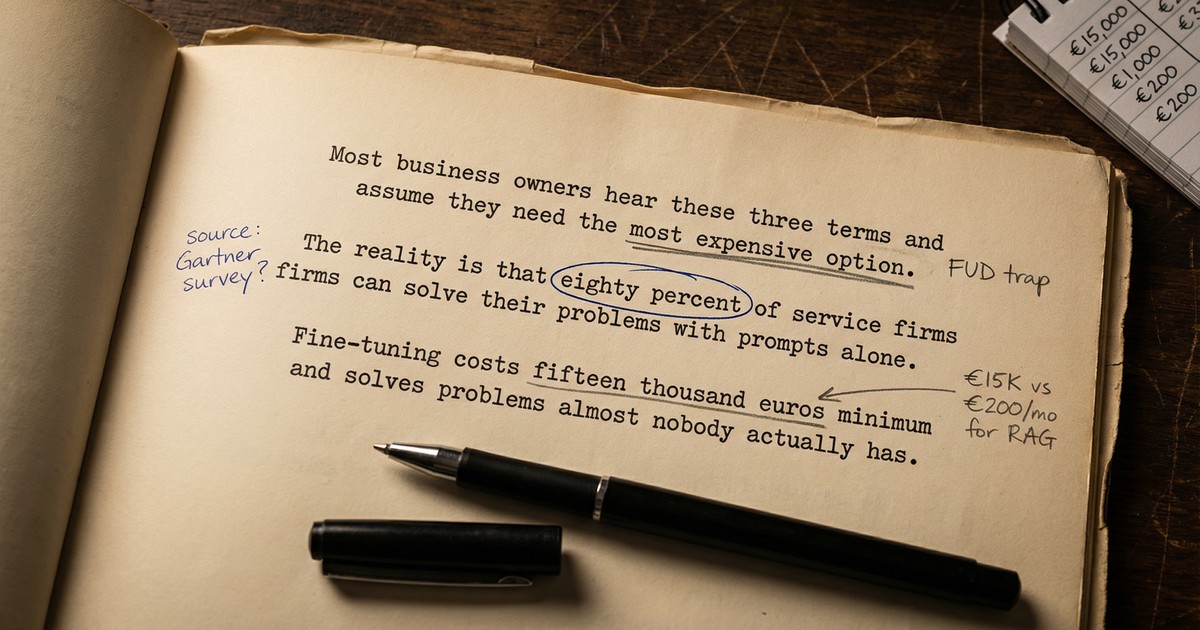

73% of companies invest in AI customization approaches they do not need. The vendor ecosystem has every incentive to sell you the most complex solution. More infrastructure means more revenue for them. But for most service businesses, the simplest approach is almost always the right one.

Three terms keep coming up: prompt engineering, RAG, and fine-tuning. You have probably heard all three. You may have been told you need all three. Here is what they actually mean and which one fits your situation.

What These Terms Actually Mean

Forget the technical definitions. Think of it this way.

Prompt engineering is giving clear instructions. You tell the AI what you want, show it examples of good work, and structure your request so it understands the context. No new tools. No infrastructure. Just better communication with the AI you already have access to.

When a creative agency we spoke with wanted to automate proposal generation, the first thing we tested was a well-structured prompt that included their intake form data, brand guidelines, and two examples of past proposals. That single prompt got them 80% of the way there in an afternoon.
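As a rough illustration of what "well-structured" can mean here, the sketch below assembles intake data, brand guidelines, and past proposals into one prompt. The function name, field names, and wording are hypothetical placeholders, not the prompt the agency actually used.

```python
def build_proposal_prompt(
    intake: dict[str, str], brand_guidelines: str, examples: list[str]
) -> str:
    """Assemble one structured prompt from intake data, guidelines, and examples."""
    # Turn the intake form into a readable bullet list.
    intake_lines = "\n".join(f"- {field}: {value}" for field, value in intake.items())
    # Number each past proposal so the model can tell them apart.
    example_blocks = "\n\n".join(
        f"Example proposal {i}:\n{text}" for i, text in enumerate(examples, start=1)
    )
    return (
        "You are drafting a client proposal for our agency.\n\n"
        f"Brand guidelines:\n{brand_guidelines}\n\n"
        f"Client intake form:\n{intake_lines}\n\n"
        f"{example_blocks}\n\n"
        "Write a new proposal for this client that matches the tone and "
        "structure of the examples."
    )


# Hypothetical sample data, for illustration only.
prompt = build_proposal_prompt(
    intake={"Client": "Example Co", "Goal": "Rebrand before a product launch"},
    brand_guidelines="Plain language, short paragraphs, no jargon.",
    examples=["(past proposal 1 text)", "(past proposal 2 text)"],
)
print(prompt)
```

The resulting text can be pasted into any chat assistant. The point is the structure (role, guidelines, context, examples, task), not the specific wording.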

RAG (Retrieval-Augmented Generation) is giving the AI a filing cabinet. You connect it to your company documents, client histories, SOPs, or product information. When someone asks the AI a question, the system searches your files first, pulls out the relevant parts, and hands those to the AI alongside the question. The AI answers using your actual data instead of making things up.

One of the recurring problems we see: a business has information scattered across a CRM, a project management tool, email threads, and shared drives. Nobody has a full picture of any client. RAG solves this by letting the AI search across all of it.
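The search-then-answer flow described above can be sketched in a few lines. This is a toy version under stated assumptions: it ranks documents by simple word overlap, whereas production RAG systems typically use embeddings and a vector database. The document names and contents are invented for illustration.

```python
import re


def tokenize(text: str) -> set[str]:
    """Lowercase the text and split it into a set of words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question: str, documents: dict[str, str], top_k: int = 2) -> list[str]:
    """Return the names of the documents sharing the most words with the question."""
    question_words = tokenize(question)
    scored = [(len(question_words & tokenize(text)), name) for name, text in documents.items()]
    # Highest overlap first; ties broken alphabetically by name.
    ranked = sorted(scored, key=lambda pair: (-pair[0], pair[1]))
    return [name for score, name in ranked[:top_k] if score > 0]


def build_rag_prompt(question: str, documents: dict[str, str]) -> str:
    """Hand the retrieved passages to the model alongside the question."""
    names = retrieve(question, documents)
    context = "\n\n".join(f"[{name}]\n{documents[name]}" for name in names)
    return f"Answer using only the context below.\n\nContext:\n{context}\n\nQuestion: {question}"


# Hypothetical snippets standing in for a CRM, a project tool, and an inbox.
documents = {
    "crm_note": "Acme Ltd signed a retainer contract in March and prefers monthly invoicing.",
    "project_board": "Website redesign for Birch Studio is due in June.",
    "email_thread": "Acme Ltd asked to move the kickoff call to Friday.",
}
question = "What contract does Acme Ltd have?"
prompt = build_rag_prompt(question, documents)
print(prompt)
```

Only the two Acme snippets reach the model; the unrelated project note is left out. That filtering step is what keeps the prompt small when the underlying library is large.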

Fine-tuning is retraining the AI itself. You feed the model hundreds or thousands of examples until it internalizes your specific style, format, or domain patterns. The result is a custom model. It costs more, takes longer, and requires technical expertise that most service businesses do not have in-house.

If you are new to how these AI models work under the hood, our explainer on LLMs covers the basics.

Here is the critical point: these are not levels on a ladder. Fine-tuning is not the “advanced” version of prompt engineering. They solve different problems. Choosing the wrong one wastes money. Choosing the right one saves it.

Prompt Engineering: Start Here, Seriously

For most service businesses we work with, prompt engineering solves the problem. Not as a temporary fix. As the actual solution.

It works when the AI already has the general knowledge to do the task: writing emails, summarizing meeting notes, drafting proposals from a brief, creating outreach messages, structuring reports. The AI knows how to do all of this. It just needs clear instructions about how you want it done for your business.

We had a conversation with a company whose AI-generated client content still needed heavy editing for personal touches and brand specifics. The AI was producing generic output because it was getting generic instructions. Once we structured the prompt with the brand’s voice guidelines, specific client context, and examples of approved output, the editing time dropped significantly.

Modern AI tools have massive context windows. Claude can process 200,000 tokens in a single prompt, roughly 150,000 words. That means you can paste in a full client brief, your company’s tone of voice guidelines, three examples of past work, and detailed instructions, all at once. No special infrastructure required.
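To check quickly whether your material fits, the figures above imply roughly 0.75 words per token. The sketch below uses that ratio as a back-of-the-envelope estimate. Real tokenizers count differently depending on language and formatting, and the reserve for the model's answer is an assumed value.

```python
CONTEXT_WINDOW_TOKENS = 200_000
WORDS_PER_TOKEN = 150_000 / 200_000  # 0.75, derived from the figures above


def estimate_tokens(text: str) -> int:
    """Rough token estimate from a whitespace word count (not a real tokenizer)."""
    return round(len(text.split()) / WORDS_PER_TOKEN)


def fits_in_context(parts: list[str], reserve_for_answer: int = 4_000) -> bool:
    """Check whether all prompt parts fit, leaving room for the reply.

    reserve_for_answer is an assumed buffer, not a documented limit.
    """
    total = sum(estimate_tokens(part) for part in parts)
    return total + reserve_for_answer <= CONTEXT_WINDOW_TOKENS


# Hypothetical inputs: a brief, tone guidelines, and three past examples.
client_brief = "word " * 3_000
tone_guidelines = "word " * 1_500
past_examples = ["word " * 2_000] * 3
print(fits_in_context([client_brief, tone_guidelines, *past_examples]))
```

When this check starts failing because the client material keeps growing, that is a practical sign the task is outgrowing a single prompt.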

What it costs: Nothing beyond your existing AI subscription. A ChatGPT Plus or Claude Pro plan runs about €20/month. Implementation takes hours.

Where it falls short: The AI only knows what you put in the prompt plus what it learned during training. It does not know your client history, internal pricing, or proprietary processes unless you paste them in every time. For a business with 5 clients, that is manageable. For 50 or 200, it is not.

Use prompt engineering when:

- The task uses general knowledge the AI already has

- You can include all the necessary context in a single conversation

- You are still figuring out whether AI can handle this task at all

- “Good enough with a quick review” is acceptable

If you have not tried this seriously, do not move to anything else yet. A well-crafted prompt with real examples consistently outperforms a poorly set up RAG system or a fine-tuned model trained on weak data. For a deeper dive into what makes prompts effective, see our practical guide to prompt engineering.

RAG: When the AI Needs to Know Your Business

RAG becomes necessary when the AI needs your company’s specific information, and there is too much of it to paste into every prompt.

This is the situation we see most often. A services firm has dozens of client accounts. Each has a history of engagements, contracts, preferences, and communication. When the AI drafts a proposal for a specific client, it needs that client’s actual history, not a generic template.

Or consider project management. One prospect told us their biggest headache was information scattered across Monday.com, email, and spreadsheets. Nobody had the full picture. Leads fell through the cracks because the CRM only captured warm prospects, while everything else lived in someone’s inbox. RAG connects the AI to all of those sources so it can pull the right information on demand.

The numbers back this up. 71% of early AI adopters in business have implemented RAG. A European bank automated audit processes and saved €20 million over three years. These are not experiments. They are production systems.

RAG has also gotten noticeably better in the past year. Hybrid search, combining keyword matching with semantic AI search, has improved retrieval quality by up to 48%. That means the AI finds the right document more often, even when the user’s question does not match the exact wording in the files.
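One common way to combine a keyword ranking with a semantic ranking is reciprocal rank fusion (RRF). The sketch below assumes both searches have already produced ranked lists of document IDs. The IDs are invented, and RRF is only one of several fusion methods, not necessarily the one behind the figure above.

```python
def reciprocal_rank_fusion(rankings: list[list[str]], k: int = 60) -> list[str]:
    """Merge several ranked lists into one using reciprocal rank fusion.

    Each document earns 1 / (k + rank) from every list it appears in.
    k = 60 is a conventional default for this method.
    """
    scores: dict[str, float] = {}
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] = scores.get(doc_id, 0.0) + 1.0 / (k + rank)
    # Highest fused score first; ties broken alphabetically.
    return sorted(scores, key=lambda doc_id: (-scores[doc_id], doc_id))


# Hypothetical results from a keyword search and a semantic search.
keyword_ranking = ["pricing_2024", "contract_acme", "sop_onboarding"]
semantic_ranking = ["rate_card", "pricing_2024", "contract_acme"]

fused = reciprocal_rank_fusion([keyword_ranking, semantic_ranking])
print(fused)
```

Documents that both searches agree on rise to the top, while a document found by only one search (such as `rate_card`, which matches the meaning but not the wording) still makes the list.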

What it costs: Vector databases like Pinecone start free, with production plans from around €45/month. Total RAG infrastructure for an SMB typically runs €60-900/month depending on data volume. Setup takes days to a few weeks.

Where it falls short: RAG only works as well as the documents behind it. If your SOPs are outdated, your client records are inconsistent, or your files are disorganized, the AI will confidently give wrong answers based on bad source material. Getting your documents in order is often the real first step.

Use RAG when:

- The AI needs access to your company-specific knowledge

- That knowledge is too large to paste into every prompt

- The information changes regularly (pricing, client details, processes)

- Accuracy matters and the AI cannot be guessing

You do not need RAG when:

- The task only requires general knowledge

- Your context fits comfortably in a single prompt

- You are still testing whether AI can even do this task

RAG’s total cost of ownership is 10 to 50 times lower than that of fine-tuning. If you are debating between the two, start with RAG.

Fine-Tuning: You Probably Do Not Need This

Fine-tuning is what most people picture when they think of “custom AI.” It is also what vendors are most eager to sell. And it is the approach that service businesses almost never need.

Fine-tuning trains the model on your examples until it internalizes specific patterns. The result is a model that writes in your style, follows your format, or handles your domain without being told every time. JPMorgan used it to save 360,000 legal review hours annually. E-commerce companies with thousands of product descriptions report 60% faster content production.

But notice the scale. JPMorgan processes millions of documents. E-commerce companies generate thousands of listings per month. If your business produces 10 proposals a month or handles 50 client emails a day, fine-tuning is like buying a container ship to cross a canal.

There is a deeper issue most people miss: fine-tuning changes how the AI behaves, not what it knows. It is behavioral alignment, not fact storage. If you fine-tune a model with your company’s knowledge and that knowledge changes next quarter, the model will confidently produce outdated information. It does not know its facts are stale.

The cost has come down with techniques like LoRA and QLoRA. What used to require €45,000 in GPU time can now be done for €1,000-3,000. But data preparation (collecting and cleaning the 1,000+ training examples that fine-tuning typically needs) adds 20-40% to the total. And you need to retrain every time your requirements change.

What it costs: €1,000-11,000 per training cycle, plus data preparation. Ongoing retraining as your business evolves. Requires technical expertise or a specialized vendor.

Fine-tuning makes sense when:

- You have 1,000+ examples of exactly what good output looks like

- The task is high-volume and repeatable: thousands of identical outputs per month

- Prompt engineering and RAG genuinely cannot get the style or format right

- The underlying knowledge is stable and does not change often

- The ROI clearly justifies the investment

For most service businesses, fine-tuning does not make sense. Not because it is bad technology. Because prompt engineering and RAG handle the vast majority of real use cases at a fraction of the cost and complexity.

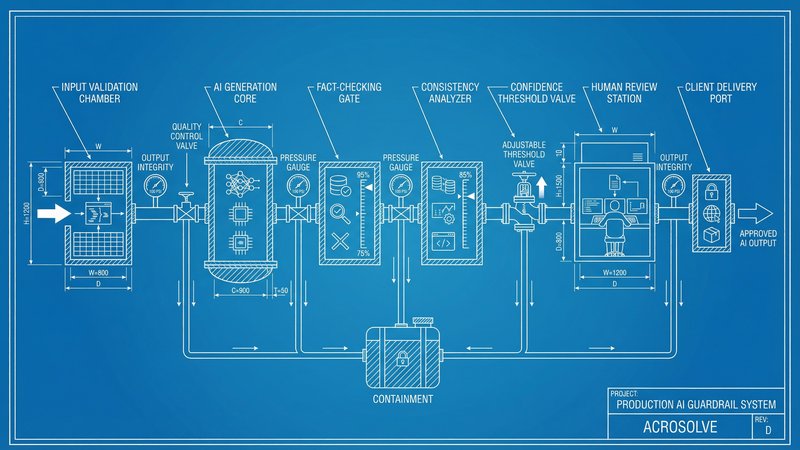

How We Think About This at Acrosolve

Internally, we use both prompt engineering and RAG to run our own operations. Our content pipeline, proposal generation, lead management, and client research all run on structured prompts connected to our knowledge base through RAG. We do not use fine-tuning. We have never needed to.

For clients, we typically recommend starting with prompt engineering. It is the fastest way to prove whether AI can handle a specific workflow, and it often turns out to be the complete solution. Proposal automation, outreach emails, meeting follow-ups, report generation. For most of these, a well-structured prompt with the right context gets the job done.

RAG comes into play when a client has a clear use case from the start: a large document library the AI needs to search, a knowledge base that changes regularly, or client-specific information spread across multiple systems. When a consulting firm needed their AI to reference past engagements and contracts, RAG was the obvious first step. But when a creative agency wanted to automate proposals, prompt engineering alone got them 80% of the way there in an afternoon.

The pattern we see across company sizes is consistent:

For service businesses under 50 people, prompt engineering handles the majority of use cases. The knowledge base is small enough to manage within prompts, the volume is moderate, and the team benefits most from speed and simplicity. RAG adds value when client-specific data starts to pile up or when information is scattered across too many tools.

For larger service businesses, RAG becomes more of a default requirement. More clients means more data the AI needs to reference. More team members means more consistency needed across outputs. More processes means more SOPs the AI needs to follow. These organizations typically need prompt engineering and RAG working together from the start.

Fine-tuning remains rare across both. The threshold (over 1,000 training examples, thousands of identical outputs monthly, and stable knowledge that does not change) is one that even larger service businesses seldom reach. It belongs to enterprises processing at industrial scale.

The Decision in Three Questions

Before spending anything on AI customization, ask these in order.

1. Can a well-written prompt solve this?

Write a detailed prompt with your brand voice, 2-3 examples of ideal output, and the relevant context. Test it with real scenarios. This takes a day and costs nothing. You may be done.

2. Does the AI need your company’s specific knowledge?

If the task requires client history, internal SOPs, product details, or any proprietary information that is too large to paste every time, you need RAG. Budget €60-900/month. Setup takes days to weeks.

3. After trying both, is it still not good enough?

Is the model failing on style, tone, or format even with good prompts and good data? Do you have over 1,000 labeled examples? Is the volume high enough to justify €1,000+ per training cycle? Can you prove the ROI?

If yes to all of those, consider fine-tuning. If no to any of them, you are not ready for it yet.

The order matters. Every euro spent on fine-tuning before you have exhausted prompt engineering and RAG is almost certainly wasted.
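The three questions can be written down as a small decision function. This is a sketch of the framework above, not a substitute for testing. The field names are hypothetical, and the thresholds are the ones stated in this article.

```python
from dataclasses import dataclass


@dataclass
class UseCase:
    """Answers to the three questions for one workflow (hypothetical field names)."""
    prompt_solves_it: bool = False
    needs_company_knowledge: bool = False
    rag_in_place: bool = False
    still_fails_on_style_or_format: bool = False
    labeled_examples: int = 0
    volume_justifies_cost: bool = False
    roi_proven: bool = False


def recommend(case: UseCase) -> str:
    # Question 1: can a well-written prompt solve this?
    if case.prompt_solves_it:
        return "prompt engineering"
    # Question 2: does the AI need company knowledge it does not have yet?
    if case.needs_company_knowledge and not case.rag_in_place:
        return "RAG"
    # Question 3: every condition must hold before fine-tuning is worth considering.
    ready = (
        case.still_fails_on_style_or_format
        and case.labeled_examples > 1000
        and case.volume_justifies_cost
        and case.roi_proven
    )
    return "consider fine-tuning" if ready else "not ready for fine-tuning"


print(recommend(UseCase(prompt_solves_it=True)))
print(recommend(UseCase(needs_company_knowledge=True)))
print(recommend(UseCase(needs_company_knowledge=True, rag_in_place=True,
                        still_fails_on_style_or_format=True, labeled_examples=500)))
```

Note that the checks run in the same order as the questions, so a use case can only reach the fine-tuning branch after the two cheaper options have been ruled out.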

Where to Start This Week

- Pick your most painful recurring task. The one that eats hours every week: proposals, follow-up emails, reports, data entry. Open ChatGPT or Claude, write a proper prompt with context and examples, and test it. See how far you get before investing in anything else.

- If you hit the knowledge wall, where the AI needs information it does not have, that is your signal to explore RAG. Start with a small subset of your documents. Validate the approach before committing to infrastructure.

- Ignore fine-tuning until prompt engineering and RAG have clearly failed. For 95% of service businesses, they will not.

The right approach is the simplest one that works. For smaller teams, that usually means prompt engineering handles the bulk of it. For larger service businesses with more clients and more data, prompt engineering and RAG working together is the standard. Fine-tuning stays reserved for industrial-scale operations that most service businesses will never reach.

Want to figure out which approach fits your workflows? Take the AI Readiness Assessment and we will map your use cases to the right level of AI customization. No unnecessary complexity, no overselling.

Thom Hordijk

Founder

Get posts like this in your inbox every week

Weekly insights on AI and automation for B2B service businesses. No hype, just what works.

Related Articles

View all articles

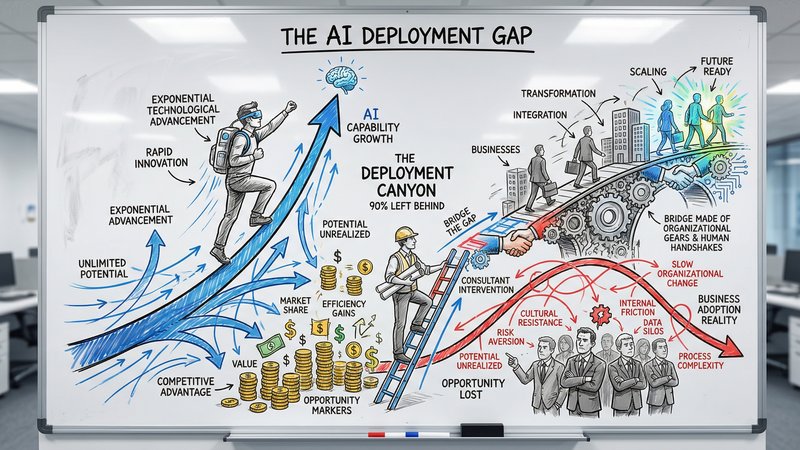

90% of Businesses Don't Use AI. That's the Opportunity.

90% of US businesses still don't use AI in production. The gap between capability and deployment is where the real opportunity lives for service businesses.

The Dark Side of Vibe Coding for Businesses

One year after the birth of vibe coding, the security incidents are adding up. Learn why speed without safety puts your business data at risk.

AI Reliability: The Missing Piece in Production Deployment

Only 31% of AI use cases reach production. The gap is not capability, it is reliability. Here is how to build AI systems your team and clients can actually trust.